How to Redact PII in Non-English Call Recordings

by Ali Rind, Last updated: April 17, 2026, ref:

TL;DR. Most redaction platforms are built and tuned for English. Running non-English recordings through them produces unreliable output. Defensible multilingual redaction requires language-specific transcription accuracy, language-specific PII detection, and a structured evaluation process before committing to a vendor for global contact center work.

A global contact center produces audio in whatever language the customer speaks. For a multinational operation, that means Spanish in Mexico, Iberian Portuguese in Lisbon, Brazilian Portuguese in São Paulo, Japanese in Osaka, Korean in Seoul, Mandarin in Shanghai, French in Paris, German in Frankfurt. Compliance applies to all of it.

Redacting PII across that language mix is where most vendors fail. This guide walks through why non-English redaction is harder than English redaction, what good multilingual redaction actually requires, and how to evaluate a platform before committing to it.

Why Non-English Call Recordings Break Most Redaction Tools

English is overrepresented in every stage of the audio redaction pipeline. Training data, acoustic models, named entity recognition (NER) models, and product testing are all heavily weighted toward English. A vendor can deliver a reliable English redaction product without ever proving out any other language in depth.

The result is predictable. Non-English recordings run through those platforms produce transcripts with elevated word error rates, PII detection models that miss entities they would catch in English, and redaction output that looks clean on the surface but fails QA review. The gap is not always visible in a short demo on a clean recording. It shows up on real contact center audio at volume. Our primer on audio redaction software covers the end-to-end pipeline every non-English workload has to pass through, and VIDIZMO's longer audio redaction software guide explains why automated detection is the only viable approach at contact center scale.

Two specific issues make non-English call redaction harder than English.

The Two Bottlenecks: Transcription Accuracy and PII Detection Models

The redaction pipeline has two separable stages that both depend on language. Both have to work for the overall output to be reliable.

Transcription accuracy. The platform has to produce a text transcript of the spoken audio. Accuracy is measured in Word Error Rate (WER), which captures how many words are wrong, missing, or inserted. A WER of 5 means the transcript is very close to the original spoken content; a WER of 30 means nearly a third of the output is unreliable. WER varies dramatically by language, by accent, by audio quality, and by domain vocabulary. A platform that scores 5 WER on clean English interviews can score 25 WER on accented call center audio in a second language.

PII detection models. Once the transcript exists, a language-specific model identifies PII. Names, addresses, dates, numbers, identifiers. The model has to know what a French phone number looks like, what patterns indicate a Spanish DNI, how Japanese names are typically structured, what a German IBAN format is. A generic English NER model pointed at a Spanish transcript will miss much of the PII even if the transcript is accurate, because the model does not know what to look for. For a deeper look at how transcript-based detection drives redaction outcomes, see our walkthrough on PII redaction in audio transcripts.

Both bottlenecks have to be solved per language. A platform that lists a language as "supported" but only validates one of the two stages is not production-ready for that language.

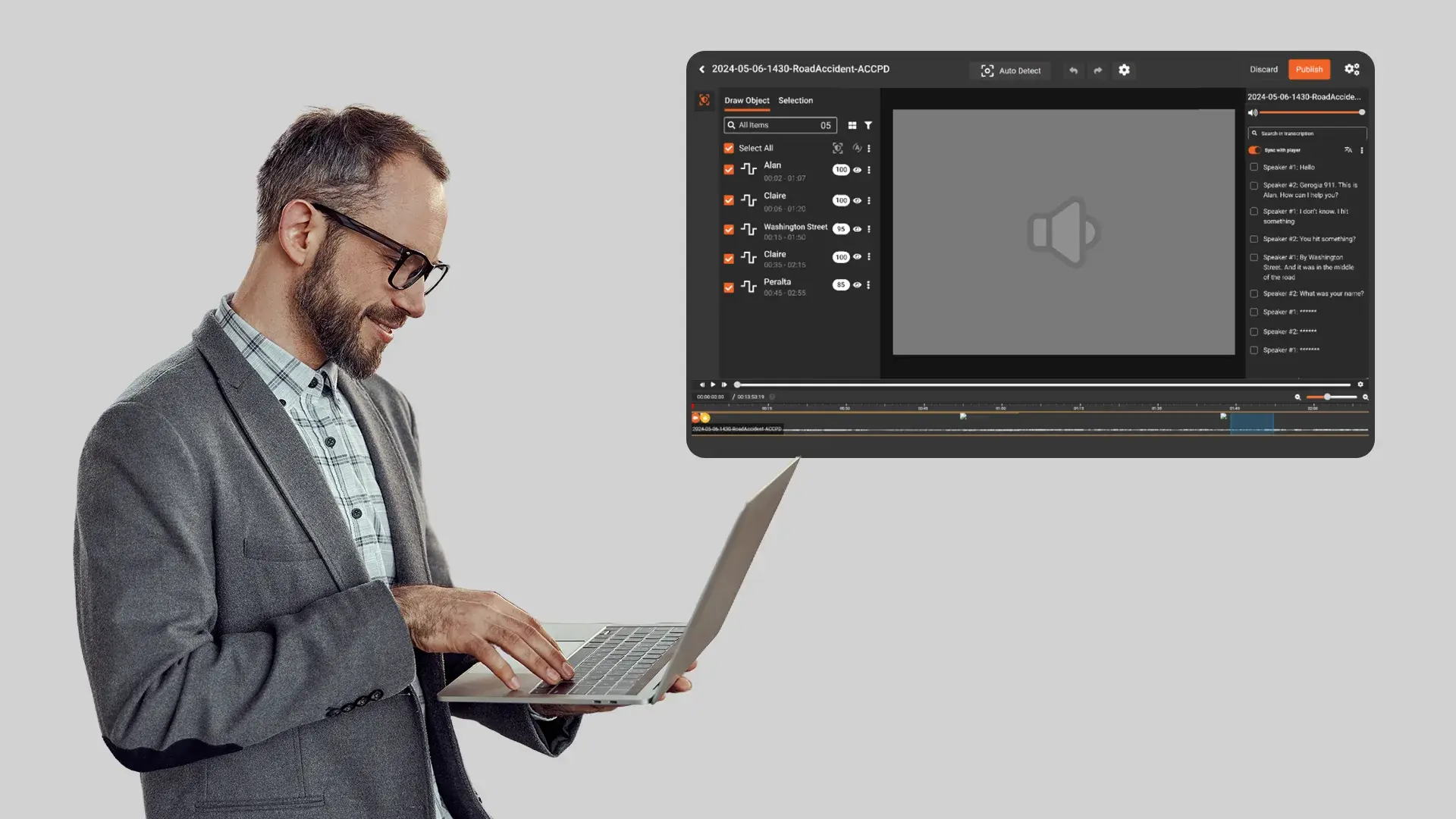

VIDIZMO Redactor provides transcription, translation, and closed captions across more than 40 transcription languages and over 50 translation languages, with validated Word Error Rates published per language. PII detection models operate over transcript output with language-aware entity rules, including country-specific identifier patterns for the US, UK, Canada, India, and the EU. The spoken PII redaction feature page details how detection integrates with audio output.

Redacting Spanish, French, German, and Italian Call Recordings

Major European languages sit at the favorable end of the multilingual redaction spectrum. Acoustic models are well-trained, NER models are mature, and the underlying PII conventions are well-understood.

Based on VIDIZMO's published Word Error Rate benchmarks, the four languages perform as follows: Spanish at 3.5 WER, Italian at 4.2, Portuguese at 4.8, German at 5.5, French at 7.7. These are in the same accuracy tier as English and can be expected to produce transcription output suitable for automated PII detection without heavy human review.

For PII, each language carries its own identifier conventions. Spanish recordings contain DNI and NIE numbers. French recordings contain INSEE numbers and SIRET identifiers. German recordings contain Steuer-ID and Versicherungsnummer. Italian recordings contain Codice Fiscale. A redaction platform needs to handle these alongside the universal categories (names, addresses, phone numbers, bank account identifiers, credit card numbers). For financial services teams operating across these markets, our guide on redaction software for financial services covers how PCI-DSS and GDPR intersect on cardholder data spoken across languages.

Operational approach for these languages mirrors English: run sampling first to confirm detection accuracy in your specific audio conditions, tune confidence thresholds, and route low-confidence detections to human review. The volume of human review needed is usually low for these language tiers.

Iberian vs Brazilian Portuguese: Why the Split Matters in Practice

Portuguese is two effectively distinct languages for transcription purposes. Iberian (European) Portuguese and Brazilian Portuguese differ in pronunciation, vocabulary, and common expressions. Transcription accuracy on one variant is not an automatic guarantee of accuracy on the other, even within the same platform.

The operational implication is concrete. If your contact center handles both Portugal and Brazil, sample recordings from both regions separately during evaluation. Measure WER on each. If the platform validates Portuguese as a single entry, treat that as unverified accuracy for one variant until your own sampling demonstrates otherwise.

Beyond transcription, PII conventions differ between the two markets. Brazil uses CPF (Cadastro de Pessoas Físicas) and CNPJ for individuals and businesses. Portugal uses NIF (Número de Identificação Fiscal). Addresses, phone formats, and postal codes follow different patterns. PII detection has to handle both sets of identifiers if both markets are in scope.

For regulatory context, Brazilian recordings fall under LGPD, which mirrors GDPR in most respects but has its own enforcement regime and penalties.

Redacting Korean, Japanese, and Chinese Call Recordings

East Asian languages present a different profile. Script systems, word segmentation, and tonal or pitch-based distinctions all make transcription materially harder than for European languages.

VIDIZMO's published benchmarks place Japanese at 6.4 WER, which is in the Excellent tier, while Korean (15.2 WER) and Chinese (19.6 WER) sit in the Good tier where transcription output is usable with review but not fully automatable. That tier difference is the operational reality: Korean and Chinese redaction pipelines should plan on more human review as a baseline, not less.

For PII detection, each language carries structural considerations. Japanese names have a variable given-name/family-name order depending on context, and the written form may use kanji, hiragana, or katakana, which affects NER behavior. Korean addresses and phone numbers follow patterns distinct from Chinese. Chinese names are short (typically two or three characters), which can produce higher false-positive rates because short character sequences match other content more easily.

Regulatory context for these markets: Japan has APPI, South Korea has PIPA, and China has PIPL. All three mandate handling and protection of personal data with specific rules that differ from GDPR, though the underlying redaction problem is similar.

Operational approach: run sampling at higher volume than for European languages, expect a higher QA review rate, and plan on a tighter feedback loop between detection output and threshold tuning. Speaker diarization helps here because identifying the customer versus the agent narrows the scope of what needs redacting, which compensates partly for lower raw detection accuracy. Bulk audio redaction workflows handle the queue management these higher-review-volume languages require.

Handling Code-Switching and Accented English

Real contact centers do not deliver clean, single-language recordings. A customer switches from English to Spanish mid-sentence. An agent handling the French market speaks French with a non-native accent. A Filipino agent handles an Australian customer on a US-English-tuned platform. Accents and language mixing are the norm, not the exception.

No platform handles every variant perfectly. The operational response is layered rather than technical.

Run samples that reflect actual audio conditions, including the accent and language-switching patterns your contact center produces. Do not test on clean native speaker audio if your production audio is mixed. Tune confidence thresholds lower for these recordings to catch more potential PII, and route a larger share to human QA review. For known high-mix content (a customer service line that routinely handles code-switching), plan on a hybrid workflow where transcription is automated but PII review is partially manual.

In evaluation, ask vendors for WER figures against accented samples, not just against native speaker benchmarks. The difference is often larger than the overall WER improvement a product release touts.

How to Evaluate Multilingual Redaction Before You Buy

A structured evaluation prevents surprises. The pattern that works:

Prepare representative samples. Pull 20 to 50 real call recordings per language from your actual contact center, not vendor-supplied demo files. Include mixed-quality recordings, accented speakers, and code-switching examples if those are part of your production mix.

Request per-language WER figures. Ask the vendor for their published WER per language. Compare against your samples after the pilot run. If the WER on your audio is materially worse than the published figure, understand why before committing.

Run the full pipeline end to end. Transcription alone is not enough. PII detection alone is not enough. The evaluation must run transcription through detection through redaction to output, because error accumulates across stages. The audio redaction services guide walks through the full pipeline in more detail.

Measure PII recall and precision per language. Recall is how much real PII was caught; precision is how much of what was flagged was actually PII. Both matter. High recall with low precision creates false positive fatigue for reviewers. Low recall with high precision leaves compliance gaps.

Validate identifier coverage. Confirm that country-specific identifiers for each of your markets are covered. If you handle Brazil, confirm CPF and CNPJ detection. If you handle Spain, confirm DNI and NIE. If you handle Japan, confirm My Number. Missing identifier coverage is a gap that will surface during real-world operation.

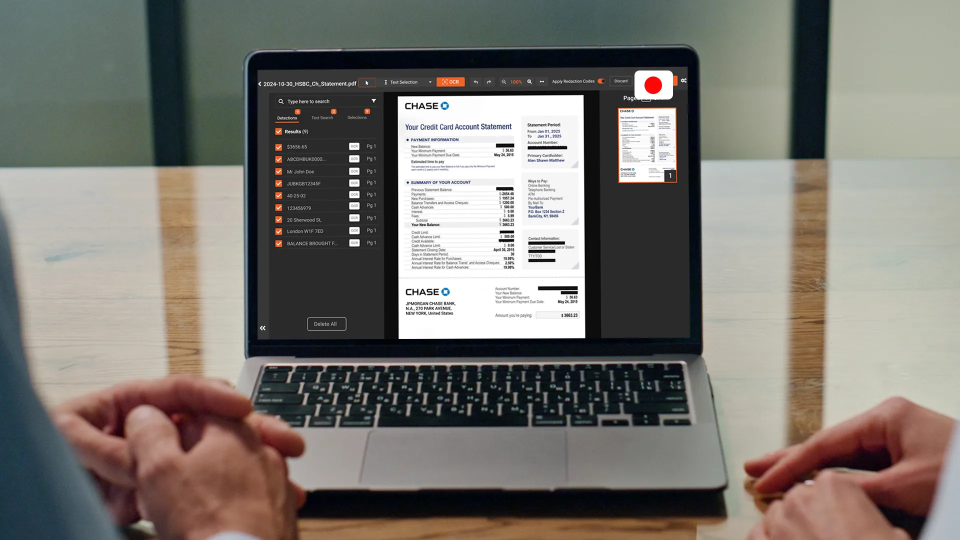

Require QA and audit documentation. The platform should produce an audit trail per redaction event with user ID, timestamp, detection confidence, and action type, suitable for a compliance review. This is a table-stakes requirement, not an advanced feature. For a broader view of how audit-ready workflows and analyst review combine, see the AI audio redaction software feature page.

Pulling It Together: Global Contact Center Redaction

A production multilingual redaction program has three things working at once. The right platform, with verified per-language accuracy. The right deployment, so data residency and regulatory scope are handled correctly across markets. And the right operating model, where human QA scales with language difficulty rather than being a fixed percentage across the board.

Most enterprises get the platform and deployment right and then underinvest in the operating model. The result is a program that works on clean English audio and silently degrades on everything else. The fix is not a better product release; it is staffing the QA layer appropriately and tuning confidence thresholds per language rather than globally.

Ready to evaluate multilingual call recording redaction?

Request a multilingual redaction pilot with real audio from your contact center.

People Also Ask

VIDIZMO Redactor supports more than 40 languages for transcription and over 50 for translation, with validated Word Error Rate benchmarks published per language. Country-specific PII identifier patterns are handled for major markets including the US, UK, Canada, India, and the EU. The practical supported set for production-grade redaction is the subset of those languages where both transcription accuracy and PII detection have been validated on real contact center audio.

Word Error Rate (WER) measures how many words in a transcript are wrong, missing, or inserted compared to the original spoken audio. It matters for redaction because PII detection runs on the transcript, not the audio directly. If the transcript has a high WER, the detection stage has less reliable input, which means more PII gets missed or mis-flagged. Validating per-language WER on your own samples is a baseline evaluation step.

Yes, meaningfully. The two variants differ in pronunciation, vocabulary, and regional expressions, and transcription accuracy on one is not an automatic guarantee of accuracy on the other. PII conventions also differ: Brazil uses CPF and CNPJ, Portugal uses NIF. Evaluate both variants separately during a pilot if your contact center handles both markets.

Code-switching is common in real contact centers and no platform handles it perfectly on automated output alone. The practical approach is to tune confidence thresholds lower for these recordings, route a larger share to human QA review, and use speaker diarization to narrow redaction scope to customer-side audio where applicable. For known high-mix content, plan on a hybrid workflow rather than expecting full automation.

Pull 20 to 50 real call recordings per language from your actual production audio. Run the full pipeline from ingest to redacted output, not just transcription. Measure WER, PII recall, and PII precision per language. Validate country-specific identifier coverage for each market. Confirm the audit trail meets your compliance requirements. If per-language results diverge materially from vendor-published benchmarks, understand why before committing.

About the Author

Ali Rind

Ali Rind is a Product Marketing Executive at VIDIZMO, where he focuses on digital evidence management, AI redaction, and enterprise video technology. He closely follows how law enforcement agencies, public safety organizations, and government bodies manage and act on video evidence, translating those insights into clear, practical content. Ali writes across Digital Evidence Management System, Redactor, and Intelligence Hub products, covering everything from compliance challenges to real-world deployment across federal, state, and commercial markets.

Jump to

You May Also Like

These Related Stories

Audio Redaction Services for Call Centers and Legal Teams

PCI-DSS Video Redaction: Remove Payment Card Data from Recorded Videos

No Comments Yet

Let us know what you think