On-Premises Redaction Software: Requirements & Deployment Checklist

by Ali Rind, Last updated: March 18, 2026

Organizations handling sensitive video, audio, and document data often need redaction software that stays entirely within their own infrastructure. Whether driven by CJIS compliance, air-gapped security mandates, or data sovereignty policies, an on-premises redaction deployment demands careful infrastructure planning.

This guide covers the on-premises redaction software requirements you need to evaluate, from server specs and storage estimation to network design and a step-by-step deployment checklist.

Why On-Premises Deployment for Redaction Software?

Not every organization can route sensitive body camera footage, medical records, or classified documents through a shared cloud. On-premises deployment gives IT teams full control over security, performance, configuration, and data management. It also enables operation in air-gapped environments with zero external network access, a hard requirement for defense, intelligence, and classified networks.

For agencies subject to CJIS, HIPAA, or FOIA requirements, on-premises deployment ensures that all AI processing runs locally, so no data leaves the facility. Combined with AES-256 encryption, role-based access control (RBAC), and chain-of-custody logging, this model is built to satisfy strict audit requirements.

Server Architecture: Components You Need

A production on-premises redaction deployment is not a single server. It is a modular architecture where each server handles a specific workload. Here is what a typical deployment includes:

Core Application Server

The Redaction Application Server (RAP) hosts the web application, user interface, workflow engine, and API layer. This is the central hub users interact with for manual and automated redaction tasks.

AI Processing Servers

AI-powered redaction, including face detection, license plate tracking, object recognition, and PII detection, requires dedicated processing capacity. Depending on the workload, you may need one or more of these:

- Object Detection / Computer Vision (OCV) Server: Runs visual AI models for face, person, license plate, vehicle, and custom object detection across video and image frames

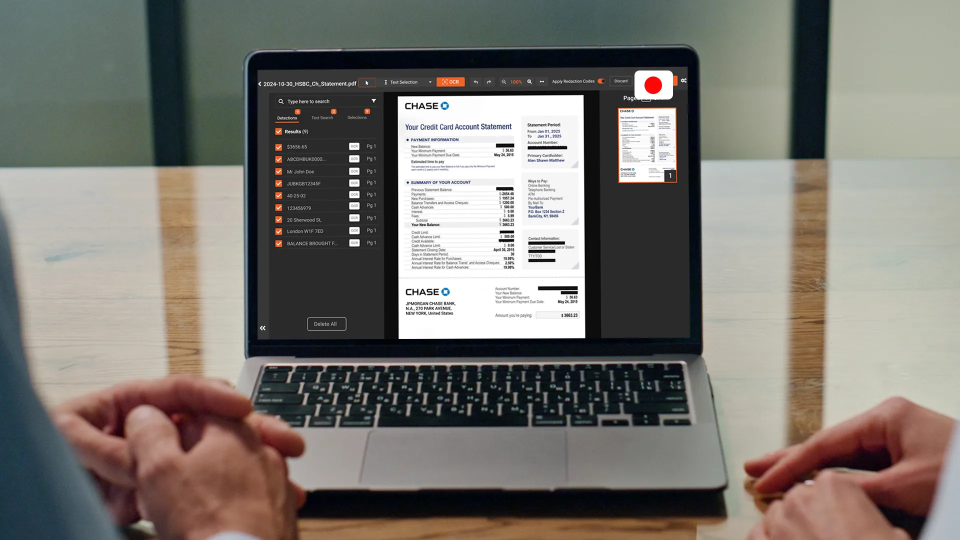

- PII Detection Server: Identifies 40+ PII types across documents, audio transcripts, and metadata using NLP and contextual AI

- OCR Server: Optical character recognition for scanned documents, handwritten text (ICR), and Perso-Arabic scripts

- Content Processing Server (CPS): Handles transcoding, format conversion, and content ingestion across 255+ file formats

Transcription and Audio Analysis

The Multilingual Transcription/Audio Analysis (TAA) Server handles speech-to-text in 82 languages, speaker diarization, audio PII detection, and translation into 74 languages.

Optional AI Servers

- AI Search Server (AIS): Powers intelligent search across metadata, transcripts, and AI-detected content

- Agentic AI Server (AGS): Supports advanced AI workflows and agentic processing

- AI Embedding Server (EBD): Generates embeddings for semantic search and content similarity

Server Sizing: Minimum and Recommended Specifications

Server sizing depends on your redaction volume, the AI models you enable, and your concurrency requirements. The following guidelines apply broadly to enterprise redaction deployments:

Compute (CPU and GPU)

- CPU: Multi-core processors (16+ cores recommended per AI server). AI workloads, especially object detection and video redaction, are compute-intensive.

- GPU: Required for the highest-accuracy AI models. VIDIZMO Redactor offers three AI model sizes: Small (CPU-suitable), Medium (balanced), and Large (GPU-intensive, highest accuracy). If you plan to run Large models for face or object detection, provision NVIDIA GPUs with CUDA support.

- All AI processing runs server-side. No client-side GPU is required; users access the system through a standard web browser.

Memory (RAM)

- Minimum: 32 GB per server for moderate workloads

- Recommended: 64–128 GB per AI processing server for concurrent video redaction pipelines

- Higher memory allocations reduce disk I/O during large batch operations

Operating System Compatibility

- Windows Server 2016 or later

- Linux (Ubuntu, CentOS/RHEL) for containerized deployments

- Docker and Kubernetes supported for consistent deployment across environments

Storage Estimation by File Volume and Format

Storage is often the most underestimated component. Redaction workflows generate multiple derivatives: original files, redacted copies, transcripts, audit logs, and AI model outputs.

Estimation Framework

[Image: storage estimation framework table]

Capacity Planning Example

An agency processing 500 hours of body camera footage and 50,000 document pages per month should plan for:

- Video: 500 hrs x 4 GB/hr x 2.5 = ~5 TB/month

- Documents: 50,000 pages x 5 MB x 1.5 = ~375 GB/month

- Total: ~5.4 TB/month of active storage (before archival or retention policies)
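The arithmetic above can be wrapped in a small calculator so the estimate is easy to rerun with your own volumes. This is a minimal sketch: the `Workload` class and `monthly_storage_gb` function are illustrative names, and the per-unit sizes and multipliers are simply the figures from the example, not measured values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """One content type in the monthly intake."""
    name: str
    units_per_month: float  # hours of video, pages of documents, ...
    gb_per_unit: float      # average raw size of one unit, in decimal GB
    multiplier: float       # raw file plus redacted copy, transcripts, logs


def monthly_storage_gb(workloads) -> float:
    """Return total active storage added per month, in decimal GB."""
    return sum(w.units_per_month * w.gb_per_unit * w.multiplier for w in workloads)


# Figures taken from the example: 4 GB/hr for video, 5 MB/page for documents.
EXAMPLE = [
    Workload("body camera video", 500, 4.0, 2.5),
    Workload("document pages", 50_000, 0.005, 1.5),
]

if __name__ == "__main__":
    total = monthly_storage_gb(EXAMPLE)
    print(f"{total:,.0f} GB/month (~{total / 1000:.1f} TB)")
```

Multiply the monthly figure by your retention period to size the active tier, then subtract whatever moves to archival storage.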

Use SAN or NAS with redundancy (RAID 10 or equivalent) for production storage. Consider tiered storage: fast SSD for active processing, HDD or cold storage for archived evidence.

Network Requirements

Bandwidth

- Internal LAN: 10 Gbps recommended between application servers and storage. Video files are large, and AI processing pipelines move data frequently between components.

- User access: 1 Gbps is adequate for browser-based access. Note that the split-screen comparison view streams the original and redacted video simultaneously, so each active reviewer consumes roughly two video streams' worth of bandwidth.
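To sanity-check these bandwidth figures against your own footage, a rough calculation like the following may help. It is a sketch under stated assumptions: video is streamed at its stored bitrate with no transcoding, the 4 GB/hr figure comes from the capacity planning example, and `usable_fraction` is a hypothetical headroom factor rather than a vendor recommendation.

```python
def avg_bitrate_mbps(gb_per_hour: float) -> float:
    """Average bitrate of footage stored at gb_per_hour (decimal GB)."""
    return gb_per_hour * 8_000 / 3_600  # GB -> megabits, hours -> seconds


def max_split_screen_reviewers(link_mbps: float, gb_per_hour: float,
                               usable_fraction: float = 0.7) -> int:
    """Reviewers a link can carry when each streams original + redacted video."""
    per_reviewer_mbps = 2 * avg_bitrate_mbps(gb_per_hour)
    return int(link_mbps * usable_fraction // per_reviewer_mbps)


def transfer_seconds(file_gb: float, link_gbps: float) -> float:
    """Ideal time to move one file across a link, ignoring protocol overhead."""
    return file_gb * 8 / link_gbps


if __name__ == "__main__":
    print(max_split_screen_reviewers(1_000, 4.0), "concurrent reviewers on 1 Gbps")
    print(transfer_seconds(4.0, 10.0), "s per 4 GB file at 10 Gbps")
    print(transfer_seconds(4.0, 1.0), "s per 4 GB file at 1 Gbps")
```

The transfer-time comparison shows why the internal LAN matters more than the user-facing link: every file crosses it several times as it moves between storage and the AI servers.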

Network Segmentation

- Isolate redaction servers on a dedicated VLAN for security

- Restrict inbound/outbound traffic to necessary ports only

- For air-gapped deployments, ensure complete network isolation with no external connectivity
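One way to keep a "necessary ports only" policy reviewable is to write the permitted flows down as data and check proposed traffic against them. The sketch below uses Python's standard `ipaddress` module; the VLAN subnets and the flow list are entirely hypothetical placeholders, and the real enforcement would still live in your firewall or switch ACLs.

```python
import ipaddress

# Hypothetical allowlist: (source network, destination network, TCP port).
ALLOWED_FLOWS = [
    ("10.20.0.0/24", "10.20.1.0/24", 443),   # user VLAN -> application server (HTTPS)
    ("10.20.1.0/24", "10.20.2.0/24", 1433),  # application server -> SQL Server
]


def is_allowed(src_ip: str, dst_ip: str, port: int, rules=ALLOWED_FLOWS) -> bool:
    """Return True only if an explicit rule permits this flow (default deny)."""
    src = ipaddress.ip_address(src_ip)
    dst = ipaddress.ip_address(dst_ip)
    return any(
        src in ipaddress.ip_network(src_net)
        and dst in ipaddress.ip_network(dst_net)
        and port == allowed_port
        for src_net, dst_net, allowed_port in rules
    )


if __name__ == "__main__":
    print(is_allowed("10.20.0.15", "10.20.1.10", 443))   # user reaching the web UI
    print(is_allowed("10.20.0.15", "10.20.2.5", 1433))   # user reaching the database directly
```

Because the function denies anything without a matching rule, an air-gapped deployment is simply the case where no rule references an external network.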

Load Balancing

For high-volume environments processing thousands of files daily, deploy a load balancer in front of the application tier. Queue-based processing, tested at 1.1M+ recordings, distributes AI workloads across available servers automatically.

Database Dependencies

- SQL Server or PostgreSQL for application metadata, user management, audit logs, and workflow state

- Use separate database instances for production and reporting at scale

- Plan for database storage growth proportional to file volume; each processed file generates metadata, redaction logs, and chain-of-custody records

Scalability Considerations for High-Volume Environments

Horizontal Scaling

Add more AI processing servers as volume grows. The modular architecture means you can scale object detection capacity independently from OCR or transcription capacity. For teams managing large volumes of recordings, batch video redaction workflows help maximize throughput across parallel server instances.
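Because each server type scales independently, the server count can be estimated per type. The following is a rough sketch, not a vendor sizing formula: `server_hours_per_content_hour` is a ratio you would measure in your own load test, and the 600 usable hours per server per month is an assumed budget that leaves headroom for maintenance.

```python
import math


def servers_needed(content_hours_per_month: float,
                   server_hours_per_content_hour: float,
                   usable_hours_per_server: float = 600.0) -> int:
    """Estimate how many servers of one type a monthly workload needs.

    Both the processing ratio and the usable-hours budget are illustrative
    inputs; replace them with measurements from your environment.
    """
    if usable_hours_per_server <= 0:
        raise ValueError("usable_hours_per_server must be positive")
    demand = content_hours_per_month * server_hours_per_content_hour
    return max(1, math.ceil(demand / usable_hours_per_server))


if __name__ == "__main__":
    # Example: 500 hours of footage, assuming 2 server-hours per footage hour.
    print(servers_needed(500, 2.0))
```

Run the estimate separately for object detection, OCR, and transcription, since each has its own processing ratio.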

Batch Processing and Queue Automation

VIDIZMO Redactor supports queue-based overnight automation. Submit bulk files for sequential unattended processing during off-hours to maximize hardware utilization without adding servers. This is particularly useful for agencies handling high-volume FOIA and public records requests.
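The idea of sequential, unattended off-hours processing can be illustrated with a small queue loop. This is a generic sketch of the pattern, not VIDIZMO Redactor's scheduler: the `process` callback, the 22:00 to 06:00 window, and the function names are all assumptions made for illustration.

```python
from collections import deque
from datetime import datetime, time


def in_window(now: time, start: time, end: time) -> bool:
    """True if now falls in [start, end); handles windows that cross midnight."""
    if start <= end:
        return start <= now < end
    return now >= start or now < end


def drain_queue(queue: deque, process, clock=lambda: datetime.now().time(),
                start: time = time(22, 0), end: time = time(6, 0)) -> list:
    """Process queued files one at a time while the off-hours window is open.

    Files still in the queue when the window closes stay there for the
    next run.
    """
    done = []
    while queue and in_window(clock(), start, end):
        item = queue.popleft()
        process(item)
        done.append(item)
    return done
```

Passing the clock in as a parameter keeps the loop testable: a test can feed it fixed times instead of waiting for the real window to open.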

Processing Unit Allocation

Each on-premises server includes an annual allocation of Processing Units (PUs). For example, a Content Processing Server includes 60,000–100,000 PUs annually. Monitor PU consumption against your workload to right-size your server fleet.
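Monitoring PU consumption can be as simple as projecting year-to-date usage forward and comparing it with the allocation. The sketch below assumes a linear run rate, and the 50% "under-used" threshold is an arbitrary illustrative value; how PUs are actually metered per file is not specified here, so the inputs are whatever your usage reports provide.

```python
def projected_annual_pus(used_so_far: float, months_elapsed: float) -> float:
    """Linear projection of annual PU consumption from usage to date."""
    if months_elapsed <= 0:
        raise ValueError("months_elapsed must be positive")
    return used_so_far / months_elapsed * 12


def pu_status(used_so_far: float, months_elapsed: float,
              annual_allocation: float, low_water: float = 0.5) -> str:
    """Classify a server as 'over', 'under', or 'ok' against its allocation."""
    utilization = projected_annual_pus(used_so_far, months_elapsed) / annual_allocation
    if utilization > 1.0:
        return "over"
    if utilization < low_water:
        return "under"
    return "ok"


if __name__ == "__main__":
    # Example: 30,000 PUs used in the first 3 months of a 100,000 PU allocation.
    print(pu_status(30_000, 3, 100_000))
```

An "over" result suggests adding capacity before the allocation runs out; a persistent "under" result suggests the fleet could be consolidated.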

On-Premises Deployment Checklist

Use this checklist to track readiness before installation begins:

Infrastructure Readiness

- Provision application server (RAP) meeting compute and memory requirements

- Provision AI processing servers based on workload profile (OCV, PII, OCR, CPS, TAA)

- Allocate storage with appropriate redundancy and tiered architecture

- Configure network with 10 Gbps internal bandwidth and proper segmentation

- Set up database server (SQL Server or PostgreSQL) with backup policies

- Provision GPU hardware if running Large AI models

Security Configuration

- Enable AES-256 encryption at rest on all storage volumes

- Configure TLS encryption for all internal server communication

- Set up SSO integration with your identity provider (such as Azure AD or Okta) using SAML 2.0, OAuth 2.0, or OpenID Connect

- Configure RBAC policies aligned with organizational roles

- Enable MFA for all administrative accounts

- Establish chain-of-custody logging and audit trail retention policies
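For the TLS item, any custom scripts or integrations that talk to the internal servers should verify certificates rather than disable checks. A minimal sketch using Python's standard `ssl` module is shown below; the TLS 1.2 floor and the optional internal CA bundle path are assumptions, since the checklist does not name a minimum protocol version.

```python
import ssl
from typing import Optional


def internal_tls_context(ca_file: Optional[str] = None) -> ssl.SSLContext:
    """Build a client-side TLS context for internal service calls.

    ca_file is a hypothetical path to your internal CA bundle; when it is
    None, the system trust store is used instead.
    """
    ctx = ssl.create_default_context(cafile=ca_file)
    # Assumed policy floor: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


if __name__ == "__main__":
    ctx = internal_tls_context()
    print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

The default context already requires a valid certificate and a matching hostname, which is the behavior you want even on an isolated network.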

Application Setup

- Install Redaction Application Server and verify web UI accessibility

- Deploy and configure each AI server component

- Validate connectivity between application server, AI servers, storage, and database

- Configure watch folder monitoring via the Desktop Application for automated bulk upload

- Set up queue-based processing rules and batch automation schedules

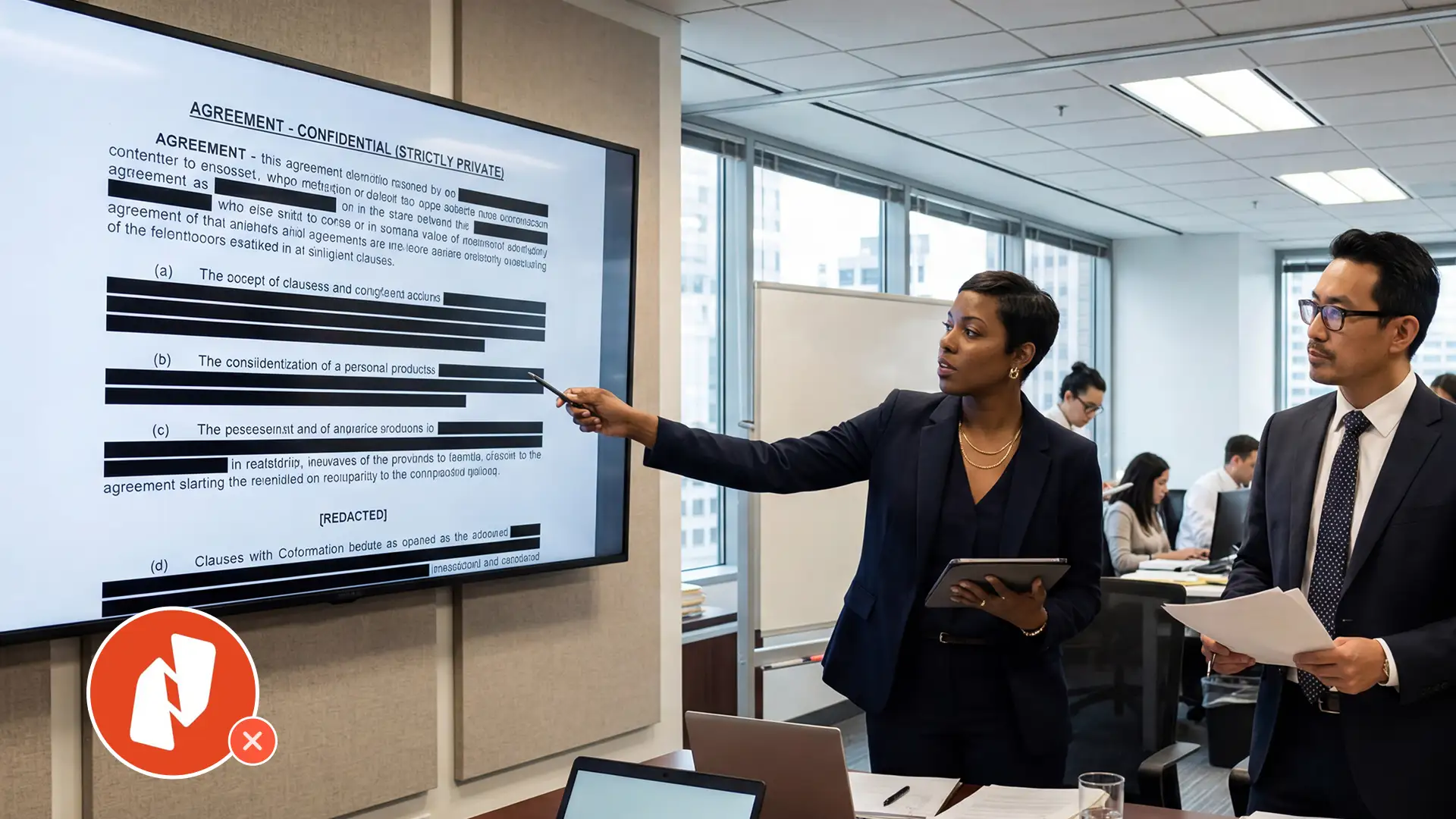

- Define redaction templates and exemption code libraries (FOIA Exemptions 1–9, state-specific codes)
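The connectivity validation step can be partly automated with a plain TCP reachability check run from the application server. This sketch only confirms that a port accepts connections, not that the service behind it is healthy; the hostnames and ports in `ENDPOINTS` are hypothetical and should be replaced with your own.

```python
import socket

# Hypothetical endpoints; substitute the hosts and ports of your deployment.
ENDPOINTS = {
    "application server": ("rap.example.internal", 443),
    "database": ("db.example.internal", 5432),
    "storage": ("nas.example.internal", 445),
}


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_all(endpoints: dict) -> dict:
    """Map each endpoint name to its reachability result."""
    return {name: port_open(host, port) for name, (host, port) in endpoints.items()}


if __name__ == "__main__":
    for name, reachable in check_all(ENDPOINTS).items():
        print(f"{name}: {'reachable' if reachable else 'UNREACHABLE'}")
```

Running the same check from each AI server as well catches one-way firewall rules that a test from the application server alone would miss.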

Integration and Testing

- Configure REST API and WebHook API integrations with existing systems

- Test end-to-end redaction workflow: upload, AI detection, review, redact, export

- Validate audit logs capture all user actions with timestamps and IP addresses

- Run a load test with representative file volumes to confirm sizing

- Verify that generating a redacted copy leaves the original file untouched
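A straightforward way to test that originals are preserved is to hash each original before redaction and compare the hash afterward. The sketch below uses SHA-256 from Python's standard library; the function names are illustrative, and it assumes you have file-level access to the stored originals during testing.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so large video files do not need to fit in RAM."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def original_untouched(path, hash_before_redaction: str) -> bool:
    """True if the file still matches the hash recorded before redaction."""
    return sha256_of(path) == hash_before_redaction
```

Record the hash at upload time, run the redaction and export, then call `original_untouched` on the stored original; any mismatch means the workflow modified the source file.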

Plan Your On-Premises Redaction Deployment

Need help sizing your infrastructure? Contact us to get a tailored deployment plan for your environment.

Key Takeaways

- On-premises redaction software deployment uses a modular server architecture: application, AI processing, transcription, and content processing are separate components that scale independently.

- GPU provisioning is only required for the highest-accuracy AI models. Smaller models run on CPU, giving flexibility to match hardware investment to accuracy needs.

- Storage planning must account for 1.5–2.5x multipliers over raw file sizes due to redacted copies, transcripts, and audit records.

- Network segmentation and encryption are non-negotiable for compliance with CJIS, HIPAA, and classified environment requirements.

- Queue-based batch processing, tested at 1.1M+ recordings, maximizes hardware utilization without requiring additional servers.

People Also Ask

What are the minimum server requirements for on-premises redaction software?

At minimum, you need a multi-core application server with 32 GB RAM, dedicated AI processing servers for the detection types you need (faces, PII, OCR), a database server, and redundant storage. A GPU is recommended for Large AI model workloads but not required for smaller models.

Can redaction software run in an air-gapped environment?

Yes. On-premises redaction platforms like VIDIZMO Redactor support fully air-gapped deployment with zero external network connectivity. All AI processing, including object detection, PII identification, OCR, and transcription, runs entirely on local servers.

How much storage does video redaction require?

Plan for approximately 2.5x the raw video file size. A one-hour body camera video at 1080p (3–5 GB) generates an original, a redacted copy, and transcript data, totaling roughly 7.5–12.5 GB per hour of footage.

Is a GPU required for AI-powered redaction?

It depends on accuracy requirements. VIDIZMO Redactor offers Small, Medium, and Large AI model sizes. Small and Medium models run on CPU. The Large model, which delivers the highest detection accuracy, requires NVIDIA GPUs with CUDA support.

Which databases does on-premises redaction software require?

Most enterprise redaction platforms require SQL Server or PostgreSQL for storing metadata, user records, audit trails, and workflow state. Plan database storage to grow proportionally with your file processing volume.

How does on-premises redaction software scale for high-volume processing?

Through horizontal scaling: add more AI processing servers as volume grows. The modular architecture lets you scale object detection, OCR, and transcription capacity independently. Queue-based automation supports unattended overnight batch processing across all servers.

Plan Your On-Premises Redaction Infrastructure With Confidence

Deploying redaction software on-premises is an infrastructure decision that directly impacts your compliance posture, processing throughput, and long-term operational costs. The modular architecture means you can start with the servers your current workload demands and scale as volume grows, without rearchitecting the deployment.

About the Author

Ali Rind

Ali Rind is a Product Marketing Executive at VIDIZMO, where he focuses on digital evidence management, AI redaction, and enterprise video technology. He closely follows how law enforcement agencies, public safety organizations, and government bodies manage and act on video evidence, translating those insights into clear, practical content. Ali writes across Digital Evidence Management System, Redactor, and Intelligence Hub products, covering everything from compliance challenges to real-world deployment across federal, state, and commercial markets.
